Introduction

Meta continues to push the boundaries of AI-driven recommendation systems to improve user experiences and advertiser outcomes. To achieve the next leap in performance, the company is scaling its ad recommendation runtime models to the size and complexity of large language models (LLMs), which enables a deeper understanding of user interests and intent. This scaling introduces a critical challenge known as the inference trilemma: balancing increased model complexity against the strict latency and cost demands of a global platform serving billions. Meta's answer is the Adaptive Ranking Model, a system designed to bend the inference scaling curve while delivering high ROI and industry-leading efficiency.

The Inference Trilemma

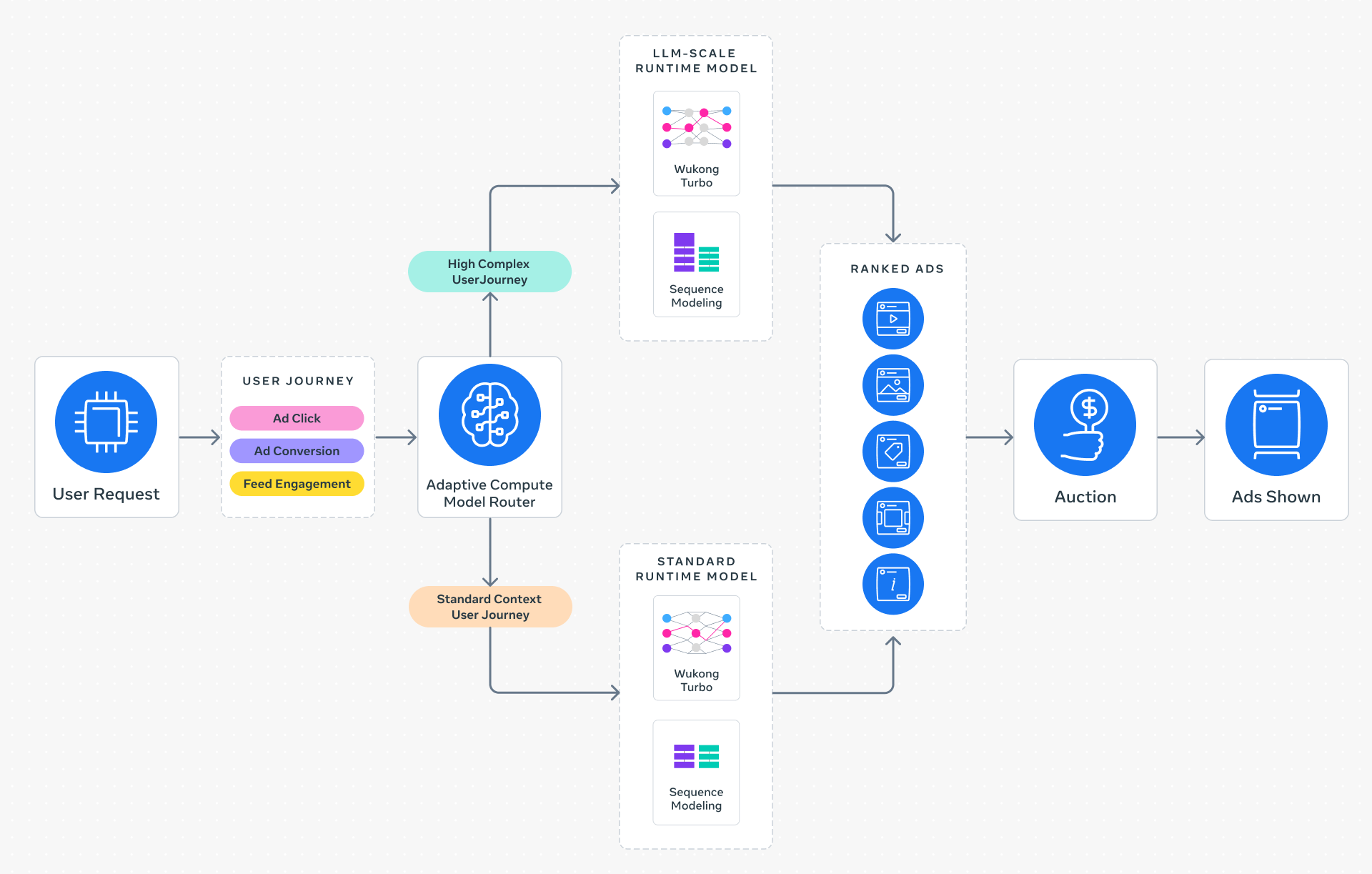

The inference trilemma arises from three competing requirements: model sophistication, low latency, and cost efficiency. As models grow to LLM scale they demand more compute and memory, yet ad serving requires sub-second response times and economical operation. Traditional one-size-fits-all inference cannot satisfy all three at once. Meta's adaptive approach addresses this by routing each request to the most suitable model based on context and intent.

Adaptive Ranking: Dynamic Model Selection

The core innovation is replacing static inference with intelligent request routing. Instead of applying the same model to every query, the system analyzes a person's context (such as browsing history, device, and real-time behavior) to determine the optimal complexity level. Every request is therefore served by a model that balances effectiveness and efficiency, which keeps the platform within its strict latency requirements while still delivering a high-quality experience.
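The routing idea can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the tier names, the latency estimates, the scalar `signal` score standing in for "context", and the thresholds are hypothetical and do not describe Meta's actual system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    """A hypothetical model variant the router can choose from."""
    name: str
    est_latency_ms: float  # assumed typical serving latency
    min_signal: float      # assumed minimum context score that justifies this tier


# Hypothetical tiers, ordered from most to least complex.
TIERS = [
    ModelTier("large", 120.0, 0.7),
    ModelTier("medium", 40.0, 0.3),
    ModelTier("small", 10.0, 0.0),
]


def route(signal: float, latency_budget_ms: float) -> ModelTier:
    """Return the most complex tier that the context score justifies
    and that fits within the request's latency budget."""
    for tier in TIERS:
        if signal >= tier.min_signal and tier.est_latency_ms <= latency_budget_ms:
            return tier
    # Nothing fits the budget: fall back to the cheapest tier.
    return TIERS[-1]


if __name__ == "__main__":
    for signal, budget in [(0.9, 200.0), (0.9, 50.0), (0.1, 200.0)]:
        print(signal, budget, route(signal, budget).name)
```

A production router would presumably use a learned policy over many features rather than a single threshold, but the shape is the same: the decision is made per request, before the expensive model runs.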

Three Key Innovations Behind Adaptive Ranking

Serving LLM-scale models at Meta's scale required rethinking the entire inference stack. Three innovations stand out:

1. Inference-Efficient Model Scaling

The Adaptive Ranking Model adopts a request-centric architecture that processes each query at a complexity tailored to that request. This allows a full LLM-scale model to run at sub-second latency, enabling nuanced understanding of interests without degrading the user experience. Rather than allocating compute uniformly, the system adjusts compute resources dynamically per request.
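To see why per-request allocation can lower average cost, consider a small worked calculation. The relative costs and traffic shares below are invented for illustration only; the source does not publish such figures.

```python
def mean_cost(traffic_share: dict, cost_per_request: dict) -> float:
    """Traffic-weighted average compute cost per request."""
    if abs(sum(traffic_share.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * cost_per_request[tier] for tier, share in traffic_share.items())


# Hypothetical relative compute cost of one request at each complexity level.
COST = {"small": 1.0, "medium": 4.0, "large": 20.0}

# Uniform allocation: every request pays for the largest model.
uniform = mean_cost({"large": 1.0}, COST)

# Adaptive allocation: only a (hypothetical) 10% of requests use the largest model.
adaptive = mean_cost({"small": 0.6, "medium": 0.3, "large": 0.1}, COST)

if __name__ == "__main__":
    print(f"uniform:  {uniform:.1f}")
    print(f"adaptive: {adaptive:.1f}")
    print(f"ratio:    {uniform / adaptive:.2f}x")
```

Under these made-up numbers the adaptive mix costs roughly a fifth of the uniform one. The real saving depends entirely on how traffic actually splits across complexity levels.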

2. Model/System Co-Design

Meta developed hardware-aware model architectures that align model design with the capabilities and limitations of the underlying hardware, down to the silicon. This co-design improves hardware utilization across heterogeneous environments, such as GPUs, custom accelerators, and CPUs, boosting efficiency without sacrificing accuracy. The result is a system that uses every watt effectively.

3. Reimagined Serving Infrastructure

Leveraging multi-card architectures and hardware-specific optimizations, the infrastructure supports models on the order of 1 trillion parameters, O(1T). This scale, unprecedented for runtime recommendation models, is achieved through parallelization and memory-efficient techniques, so that even the largest models can be served cost-effectively in real time.
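A back-of-the-envelope calculation shows why a model of this size needs more than one card. The sketch counts weight storage only and ignores activations, caches, and replication; the 80 GB per-card memory and the numeric precisions are assumptions, not details from the source.

```python
def cards_needed(num_params: int, bytes_per_param: int, card_memory_bytes: int) -> int:
    """Minimum number of cards whose combined memory can hold the weights alone."""
    total_bytes = num_params * bytes_per_param
    # Integer ceiling division avoids floating-point rounding.
    return -(-total_bytes // card_memory_bytes)


PARAMS = 10**12            # on the order of 1 trillion parameters
CARD_MEMORY = 80 * 10**9   # hypothetical accelerator with 80 GB of memory

if __name__ == "__main__":
    print("16-bit weights:", cards_needed(PARAMS, 2, CARD_MEMORY), "cards")
    print(" 8-bit weights:", cards_needed(PARAMS, 1, CARD_MEMORY), "cards")
```

With 16-bit weights the parameters alone occupy 2 TB, so they must be split across at least 25 such cards. Halving the precision halves the footprint, which is one reason memory-efficient techniques matter at this scale.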

Measurable Results

The Adaptive Ranking Model has been live on Instagram since Q4 2025. Early metrics show a +3% increase in ad conversions and a +5% increase in ad click-through rate for targeted users. These gains come without a proportional rise in computational cost, thanks to efficient routing and hardware alignment. For advertisers, this means better returns; for users, more relevant ads. The system preserves overall efficiency while delivering stronger performance for businesses of all sizes.

Conclusion

Meta's Adaptive Ranking Model represents a paradigm shift in serving LLM-scale models for advertising. By dynamically matching model complexity to user context, it resolves the inference trilemma and enables a deeper understanding of human intent. The integration of inference-efficient scaling, co-designed models, and reimagined infrastructure sets a new standard for real-time recommendation systems. As Meta continues to refine this approach, it paves the way for even more personalized and efficient ad experiences worldwide.