How Microsoft's MDASH AI System Discovered Critical Windows RCE Flaws: A Step-by-Step Breakdown

Introduction

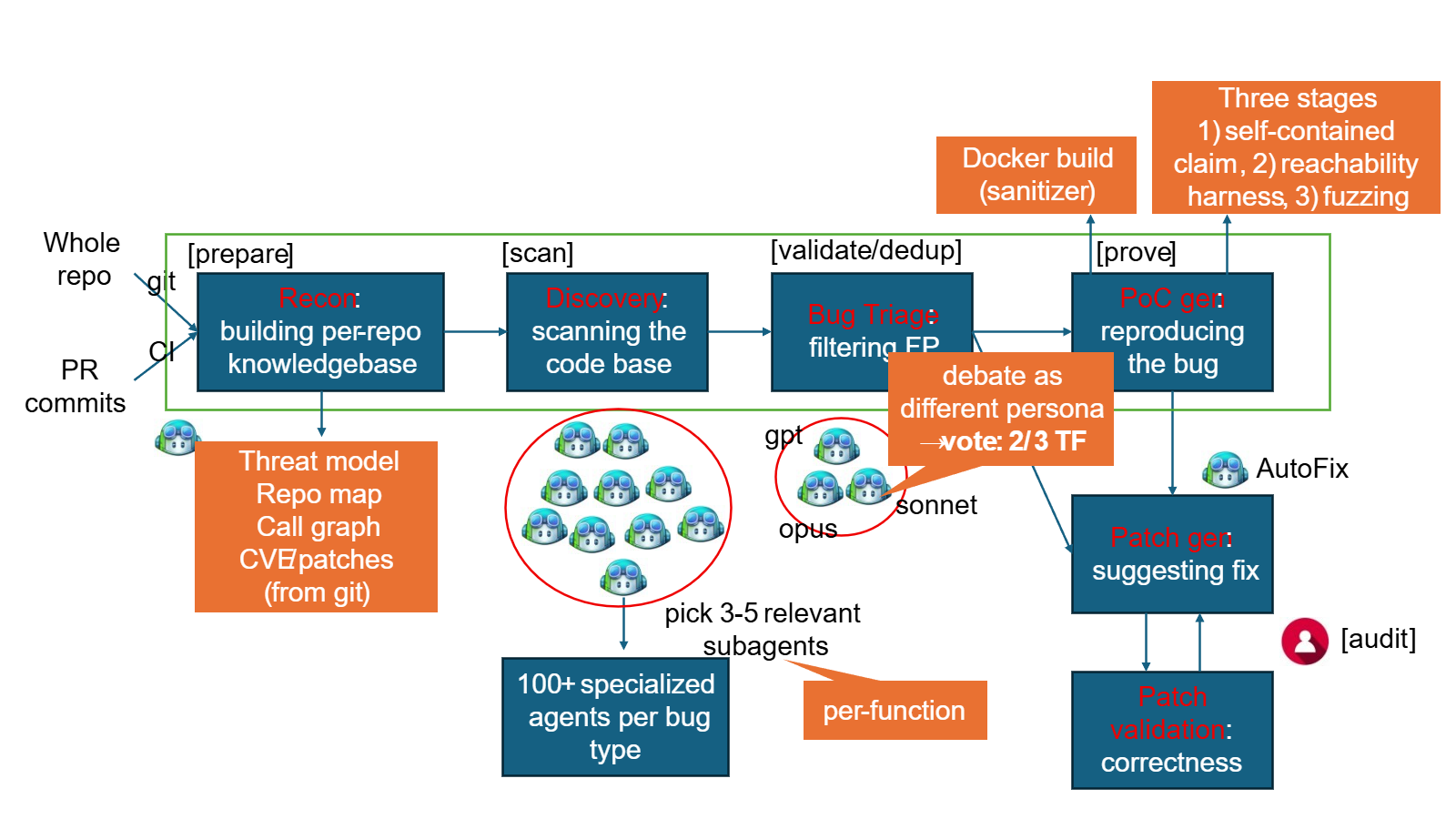

In a cybersecurity landscape where threats evolve daily, Microsoft recently demonstrated a proactive approach to defense. Its new multi-model agentic scanning harness (MDASH), an AI-powered vulnerability discovery system, autonomously uncovered 16 previously unknown flaws in Windows networking and authentication components. Among these were four critical remote code execution (RCE) bugs, which were patched in the latest Patch Tuesday release. This guide walks you through the methodology Microsoft's Autonomous Code Security team used, offering a blueprint for applying AI to vulnerability research. Whether you're a security professional, AI researcher, or IT administrator, understanding this process can help you think differently about threat hunting.

What You'll Need

- AI Models: Multiple agentic models (e.g., language models, anomaly detectors) capable of analyzing code and network behavior.

- Windows Source Code and Binaries: Access to Windows networking (e.g., TCP/IP stack) and authentication components.

- Patch Tuesday Updates: Familiarity with Microsoft's monthly security release cycle.

- Vulnerability Analysis Tools: Fuzzers, debuggers, and static analyzers for validation.

- Computational Infrastructure: Sufficient GPU/CPU resources to run AI scanning at scale.

- Security Expertise: Knowledge of RCE patterns, Windows internals, and common flaw classes (buffer overflows, improper input validation).

Step-by-Step Process

Step 1: Assemble the Multi-Model Agentic Scanning Harness (MDASH)

Microsoft's team built MDASH as a coordinated swarm of specialized AI agents. Each agent has a unique role: one models normal network behavior, another analyzes code for memory safety issues, and a third cross-references against known vulnerability patterns. Start by defining the scope — here, Windows networking (e.g., WinSock, TCP/IP driver) and authentication (e.g., Kerberos, NTLM). Deploy these agents in a test environment mirroring production Windows systems.
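As a rough illustration of the setup described above, the sketch below models a scan scope and three agents with distinct roles. The `AgentSpec` and `Harness` classes and their methods are hypothetical names invented for this example. They are not part of MDASH, whose internals are not described here.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentSpec:
    """One specialized agent in the harness (hypothetical structure)."""
    name: str
    role: str


@dataclass
class Harness:
    """A scan scope plus the agents assigned to it (hypothetical structure)."""
    scope: list[str]
    agents: list[AgentSpec] = field(default_factory=list)

    def register(self, agent: AgentSpec) -> None:
        # Reject duplicate names so every finding can be traced to one agent.
        if any(a.name == agent.name for a in self.agents):
            raise ValueError(f"duplicate agent name: {agent.name}")
        self.agents.append(agent)

    def plan(self) -> list[tuple[str, str]]:
        # Every agent reviews every in-scope component.
        return [(a.name, target) for a in self.agents for target in self.scope]


harness = Harness(scope=["WinSock", "TCP/IP driver", "Kerberos", "NTLM"])
harness.register(AgentSpec("behavioral", "models normal network behavior"))
harness.register(AgentSpec("code", "analyzes code for memory safety issues"))
harness.register(AgentSpec("pattern", "cross-references known vulnerability patterns"))

for agent_name, target in harness.plan():
    print(f"{agent_name} -> {target}")
```

Keeping the scope separate from the agent list makes it easy to start with one component and widen the scan later without touching the agent definitions.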

Step 2: Configure Agentic Models for Concurrent Scanning

Each agent is fed the same target code but processes it differently. For example:

- Code Agent parses C/C++ source for dangerous function calls (e.g., `strcpy`) and checks for structure alignment.

- Behavioral Agent monitors packet handling routines for unexpected states.

- Pattern Agent compares flows against known CVEs (e.g., CVE-2024-XXXX) using a vector database.
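To make the Code Agent's first pass concrete, here is a minimal sketch of a scan for unbounded C library calls. It is a plain regular-expression check, not the model-driven analysis the article describes, and the function list and the `find_dangerous_calls` helper are assumptions chosen for illustration.

```python
import re

# Illustrative list of C library calls that copy or format without bounds checks.
DANGEROUS_CALLS = ("strcpy", "strcat", "sprintf", "gets")

# Match the function name as a whole word followed by an opening parenthesis.
CALL_PATTERN = re.compile(r"\b(" + "|".join(DANGEROUS_CALLS) + r")\s*\(")


def find_dangerous_calls(source: str) -> list[tuple[int, str]]:
    """Return (line_number, function_name) for each flagged call."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        # Naive comment stripping: also cuts at "//" inside string literals.
        code = line.split("//", 1)[0]
        for match in CALL_PATTERN.finditer(code):
            hits.append((lineno, match.group(1)))
    return hits


sample = """\
void handle_packet(const char *payload) {
    char buf[64];
    strcpy(buf, payload);   // unbounded copy
    // strcat(buf, "x");  commented out, not flagged
    snprintf(buf, sizeof buf, "%s", payload);
}
"""

print(find_dangerous_calls(sample))  # [(3, 'strcpy')]
```

A lexical check like this only produces candidates. Deciding whether a flagged call is actually reachable with attacker-controlled input is the part that needs deeper analysis.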

Step 3: Scan Windows Networking and Authentication Components

Focus the MDASH scan on the subsystems where the 16 flaws were eventually found. Prioritize:

- Networking stacks: IPv4/IPv6 parsing, packet fragmentation, and connection state machines.

- Authentication libraries: Token verification, credential handling, and cryptographic operations.

- Remote procedure call (RPC) interfaces: A common attack surface.

Step 4: Analyze Detected Anomalies for RCE Potential

Starting from the flagged anomalies, the agents work together to assess exploitability. For the four critical RCE flaws:

- Severity scoring: Agents rate each flaw using CVSS 3.1 criteria (e.g., attack vector, attack complexity).

- Proof-of-concept generation: One agent attempts to craft a minimal exploit to confirm code execution.

- Impact assessment: Determine if the flaw allows remote unauthenticated access (as these four did).

- Cross‑validation: A separate validation agent re-runs tests to eliminate false positives.

The remaining 12 non‑critical flaws (elevation of privilege, information disclosure) are also documented but deprioritized.
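The severity scoring step can be reproduced by hand. The sketch below implements the public CVSS 3.1 base score equations for a vector string. How MDASH's agents actually derive their ratings is not stated, so treat this as a reference calculation only; the `base_score` and `roundup` names are chosen for this example.

```python
import math

# Metric weights from the CVSS 3.1 specification.
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},
    "AC": {"L": 0.77, "H": 0.44},
    "UI": {"N": 0.85, "R": 0.62},
    "C": {"H": 0.56, "L": 0.22, "N": 0.0},
    "I": {"H": 0.56, "L": 0.22, "N": 0.0},
    "A": {"H": 0.56, "L": 0.22, "N": 0.0},
}
# Privileges Required is weighted differently when Scope is Changed.
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}


def roundup(value: float) -> float:
    """Round up to one decimal place, as defined in the CVSS 3.1 specification."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector: str) -> float:
    """Compute the base score for a vector such as 'AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H'."""
    m = dict(part.split(":") for part in vector.split("/"))
    changed = m["S"] == "C"
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]
    w = WEIGHTS
    iss = 1 - (1 - w["C"][m["C"]]) * (1 - w["I"][m["I"]]) * (1 - w["A"][m["A"]])
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    exploitability = 8.22 * w["AV"][m["AV"]] * w["AC"][m["AC"]] * pr * w["UI"][m["UI"]]
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if changed:
        total *= 1.08
    return roundup(min(total, 10))


# Network-reachable, no privileges or user interaction, full impact.
print(base_score("AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 9.8
```

The example vector matches the profile described above: remote, unauthenticated, and with high impact on confidentiality, integrity, and availability, which places it in the Critical band (9.0 to 10.0).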

Step 5: Validate and Document Findings for Patch Tuesday

Microsoft follows a strict internal disclosure process. The team:

- Creates detailed bug reports with steps to reproduce.

- Assigns each flaw a unique identifier for tracking.

- Works with the Windows engineering team to develop patches.

- Times the release to coincide with Patch Tuesday — the second Tuesday of each month — ensuring consistent deployment.

In this cycle, all 16 flaws were reported to Microsoft's Security Response Center (MSRC), and patches were issued in the same Patch Tuesday bulletin.
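For planning around the release date, the second Tuesday of any month can be computed with the standard library. This is a small helper sketch; the `patch_tuesday` function name is chosen for this example.

```python
import calendar
from datetime import date


def patch_tuesday(year: int, month: int) -> date:
    """Return the second Tuesday of the given month."""
    first_weekday, _ = calendar.monthrange(year, month)  # Monday == 0
    # Days from the 1st to the first Tuesday (0 if the 1st is a Tuesday).
    offset = (calendar.TUESDAY - first_weekday) % 7
    return date(year, month, 1 + offset + 7)


print(patch_tuesday(2024, 1))   # 2024-01-09
print(patch_tuesday(2024, 10))  # 2024-10-08
```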

Step 6: Post-Discovery Review and Model Refinement

After patches are released, the MDASH team analyzes the success rate and false positive ratio. This feedback loop improves the AI models for future scans. They also share anonymized insights with the security community (within responsible disclosure bounds). For example, the specific pattern that found these RCE flaws may be encoded into a new agent to hunt similar weaknesses in other components.
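The review metrics can be tracked with a few lines of code. The sketch below assumes "success rate" means the share of reported findings that triage confirmed (precision) and "false positive ratio" means the share that triage rejected; the article does not define either term. The count of 4 rejected findings is a made-up number for illustration, and only the 16 confirmed flaws come from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScanOutcome:
    confirmed: int        # reported findings that triage confirmed as real
    false_positives: int  # reported findings that triage rejected

    @property
    def reported(self) -> int:
        return self.confirmed + self.false_positives

    @property
    def precision(self) -> float:
        # Share of reported findings that were real.
        return self.confirmed / self.reported if self.reported else 0.0

    @property
    def false_discovery_rate(self) -> float:
        # Share of reported findings that were rejected.
        return self.false_positives / self.reported if self.reported else 0.0


# 16 confirmed flaws (from the article); 4 false positives is hypothetical.
outcome = ScanOutcome(confirmed=16, false_positives=4)
print(f"precision: {outcome.precision:.0%}")                        # precision: 80%
print(f"false discovery rate: {outcome.false_discovery_rate:.0%}")  # false discovery rate: 20%
```

Tracking these two numbers per scan makes it possible to tell whether a model change actually reduced reviewer workload or only shifted it.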

Tips for Applying This Approach

- Start small: Begin with a single, well-understood component (like a DHCP client) before scaling.

- Diversity of models matters: Use different architectures (transformers, graph neural nets, rule‑based) to cover blind spots.

- Integrate with existing tools: Combine AI scanning with traditional fuzzers (like WinAFL) for deeper coverage.

- Keep human oversight: AI may miss context; always have a security researcher review critical findings.

- Respect disclosure timelines: Ensure any discovered flaws are reported through official channels before public release.

By following these steps, security teams can replicate Microsoft's success in proactively identifying high‑impact vulnerabilities — before attackers do.
