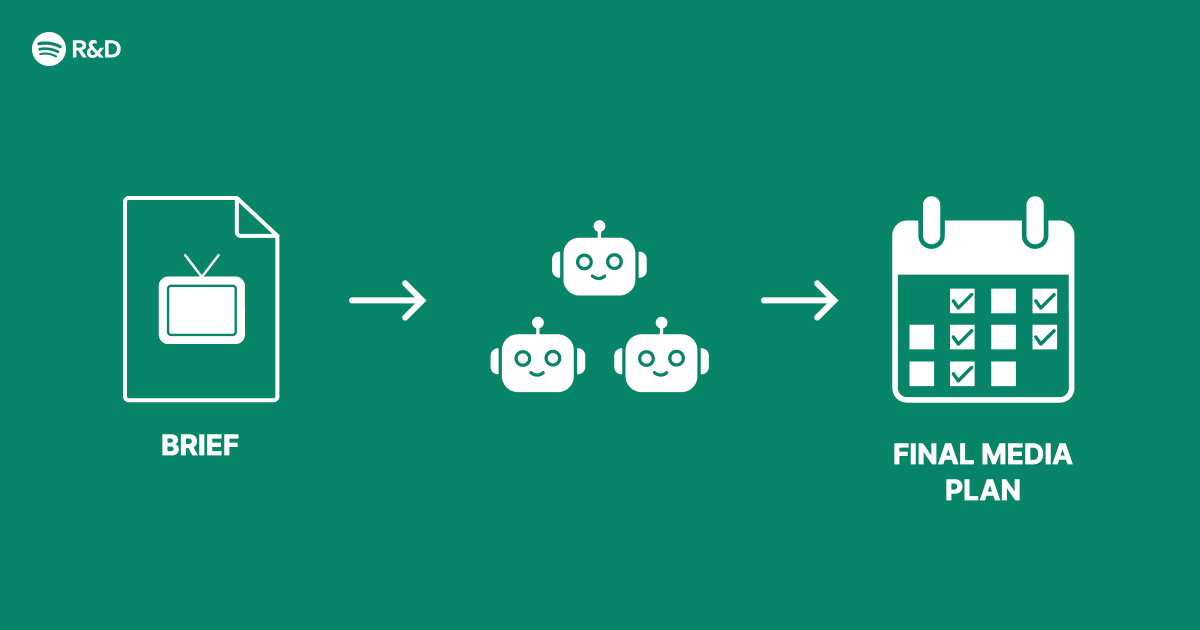

Building a Multi-Agent System for Smarter Ad Optimization

Introduction

Modern advertising requires real-time decision-making across multiple channels, user segments, and creative variations. A multi-agent architecture distributes these tasks among specialized AI agents that collaborate to improve campaign performance. This guide walks you through designing and deploying such a system, from initial setup to continuous optimization, based on proven patterns used in production environments.

What You Need

- Data pipeline: Streaming platform (e.g., Apache Kafka, AWS Kinesis) for real-time click, impression, and conversion events

- Machine learning framework: TensorFlow, PyTorch, or similar for training agent models

- Orchestration layer: Kubernetes or a serverless architecture to manage agent lifecycle

- State storage: Distributed database (e.g., Cassandra, Redis) for sharing agent context

- Ad serving infrastructure: API endpoints for bid requests and ad delivery

- Monitoring & logging: Prometheus, Grafana, ELK stack for observability

- Expertise: Team experience with reinforcement learning, multi-agent systems, and online advertising

Step 1 – Define Agent Roles and Objectives

Identify distinct responsibilities that can be handled independently. Common agents in advertising include:

- Bid Agent: optimizes bid price for each impression

- Creative Agent: selects best ad format, copy, and image for a given user

- Budget Agent: allocates daily budget across campaigns and time blocks

- Segment Agent: groups users into high-value clusters for targeting

For each agent, specify its action space (e.g., possible bid amounts), state space (observable features), and reward function (e.g., click-through rate, conversion rate, cost per acquisition). Document interaction patterns – which agents share information and how conflicts are resolved.
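One way to make these definitions concrete is to write each agent down as a small declarative spec before building any models. The sketch below is illustrative only: `AgentSpec`, its field names, the candidate bid prices, and the `net_value_reward` function are assumptions made for this example, not part of any particular framework.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Sequence


@dataclass(frozen=True)
class AgentSpec:
    """Declarative description of one agent (all field names are hypothetical)."""
    name: str
    actions: Sequence[float]                        # action space, e.g. candidate bid prices
    state_features: Sequence[str]                   # observable features forming the state
    reward_fn: Callable[[Dict[str, float]], float]  # maps an outcome to a scalar reward
    publishes: Sequence[str] = ()                   # topics this agent writes to
    subscribes: Sequence[str] = ()                  # topics this agent reads from


def net_value_reward(outcome: Dict[str, float]) -> float:
    """Example reward: conversion value minus media cost (an assumed choice)."""
    return outcome.get("conversion_value", 0.0) - outcome.get("cost", 0.0)


bid_agent = AgentSpec(
    name="bid",
    actions=[0.10, 0.25, 0.50, 1.00],
    state_features=["segment_id", "hour_of_day", "remaining_budget"],
    reward_fn=net_value_reward,
    publishes=["bids"],
)
```

Keeping the `publishes` and `subscribes` fields next to the reward definition gives you a single place to review which agents share information.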

Step 2 – Design the Communication Protocol

Agents must coordinate without creating a centralized bottleneck. Use a publish-subscribe message bus with defined topics. For instance:

- Bid Agent publishes final bid on the `bids` topic

- Budget Agent subscribes to `bids` to update remaining budget

- Creative Agent subscribes to `user-segment` topics to pre-fetch creative variants

Include a shared agent state store for long-term context (e.g., user frequency caps, creative fatigue). Define message schemas (Avro, Protobuf) to ensure compatibility. Set up a dead-letter queue for failed messages to maintain reliability.
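The publish-subscribe pattern with a dead-letter queue can be sketched in a few lines. This is an in-memory stand-in, not a Kafka or Kinesis client: `InMemoryBus`, `on_bid`, and the message fields `campaign_id` and `price` are hypothetical names, and the dictionary lookups stand in for real Avro or Protobuf schema validation.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List, Tuple

Handler = Callable[[Dict[str, Any]], None]


class InMemoryBus:
    """Toy message bus: topics, subscribers, and a dead-letter queue."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)
        self.dead_letters: List[Tuple[str, Dict[str, Any], str]] = []

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: Dict[str, Any]) -> None:
        for handler in self._subscribers[topic]:
            try:
                handler(message)
            except Exception as exc:
                # Failed messages are parked for inspection instead of being lost.
                self.dead_letters.append((topic, message, repr(exc)))


remaining_budget = {"campaign-1": 100.0}


def on_bid(message: Dict[str, Any]) -> None:
    """Budget Agent handler: a missing field raises before any state changes."""
    campaign, price = message["campaign_id"], float(message["price"])
    remaining_budget[campaign] -= price


bus = InMemoryBus()
bus.subscribe("bids", on_bid)
bus.publish("bids", {"campaign_id": "campaign-1", "price": 2.5})
bus.publish("bids", {"campaign_id": "campaign-1"})  # malformed: goes to dead letters
```

In a real deployment the bus would be the streaming platform itself, and the dead-letter queue would typically be a separate topic that an operator or retry job consumes.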

Step 3 – Implement Agent Logic

Each agent can be a separate microservice with its own ML model. Use reinforcement learning (e.g., PPO, DQN) for agents that learn online from feedback. Roll each agent out in stages:

- Train agents offline using historical data to initialize policies.

- Deploy agents in a shadow mode (log predictions without serving) to validate.

- Gradually shift to live traffic using A/B testing for each agent.

Agents may need to trade off exploration vs. exploitation. Use epsilon-greedy or Thompson sampling per agent. Ensure agents cannot destabilize the system – implement safety checks like max bid caps and budget overrun protection.
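As a minimal sketch of exploration with a safety check, the example below applies Beta-Bernoulli Thompson sampling over a small set of candidate bid prices and clamps every chosen bid to a hard cap. It is deliberately simplified: the class name `ThompsonBidder`, the candidate prices, and the binary "success" signal (for example, a click) are assumptions, and a production bidder would also weigh cost, not just success probability.

```python
import random
from typing import List, Optional, Tuple


class ThompsonBidder:
    """Beta-Bernoulli Thompson sampling over discrete bid prices, with a bid cap."""

    def __init__(self, bid_prices: List[float], max_bid: float,
                 seed: Optional[int] = None) -> None:
        self.bid_prices = list(bid_prices)
        self.max_bid = max_bid
        # Beta(1, 1) prior for every arm: one pseudo-success, one pseudo-failure.
        self.successes = [1.0] * len(self.bid_prices)
        self.failures = [1.0] * len(self.bid_prices)
        self._rng = random.Random(seed)

    def choose(self) -> Tuple[int, float]:
        """Sample a success rate per arm and play the arm with the highest sample."""
        samples = [
            self._rng.betavariate(a, b)
            for a, b in zip(self.successes, self.failures)
        ]
        arm = max(range(len(samples)), key=samples.__getitem__)
        # Safety check: never emit a bid above the configured cap.
        return arm, min(self.bid_prices[arm], self.max_bid)

    def update(self, arm: int, success: bool) -> None:
        """Fold the observed outcome back into the arm's posterior."""
        if success:
            self.successes[arm] += 1.0
        else:
            self.failures[arm] += 1.0
```

A typical loop calls `choose()` for each impression, serves the returned bid, and later calls `update(arm, success)` once the outcome is known.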

Step 4 – Build the Orchestration Layer

Manage agent lifecycles with Kubernetes. Each agent runs as a deployment with autoscaling based on request latency. For high availability, replicate agents across zones. Use a coordination service (e.g., ZooKeeper, etcd) to elect a leader for write-sensitive tasks (like overriding budget allocations). The orchestration layer should:

- Restart agents on failure with preserved state

- Roll out new agent versions with canary deployments

- Expose health endpoints for liveness/readiness probes
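To illustrate the health endpoints, here is a small sketch using only the Python standard library. The paths `/healthz` and `/readyz`, the `AgentHealth` class, and the `model_loaded` flag are assumptions chosen for this example; the idea is that liveness reports "the process is up" while readiness reports "the model is loaded and the agent can take traffic".

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Dict, Tuple


class AgentHealth:
    """Tracks whether the agent is alive and whether it can serve requests."""

    def __init__(self) -> None:
        self.model_loaded = False  # flipped to True once the policy is in memory

    def check(self, path: str) -> Tuple[int, Dict[str, str]]:
        if path == "/healthz":          # liveness: the process responds at all
            return 200, {"status": "alive"}
        if path == "/readyz":           # readiness: safe to route traffic here
            if self.model_loaded:
                return 200, {"status": "ready"}
            return 503, {"status": "loading"}
        return 404, {"status": "not found"}


health = AgentHealth()


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        status, body = health.check(self.path)
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port: int = 8080) -> None:
    """Blocks forever; call this from the agent's entry point."""
    HTTPServer(("0.0.0.0", port), HealthHandler).serve_forever()
```

Returning 503 from the readiness path while the model loads lets the orchestrator keep the pod out of rotation without restarting it.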

Step 5 – Integrate with Ad Serving Pipeline

Connect agents to real-time bid requests. When an ad opportunity arrives:

- Segment Agent classifies user ID into one or more segments.

- Creative Agent selects the best ad variant based on user segment and context.

- Bid Agent determines the maximum bid price using segment features and budget constraints.

- Budget Agent checks remaining daily spend and approves or adjusts the bid.

- The final bid is sent to the ad exchange.

Timeouts are critical – each agent must respond within milliseconds. Use asynchronous processing and caching for frequent lookups. Log every decision for later model retraining and auditing.
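The request flow above, with a per-agent timeout and a safe fallback, might look roughly like the following `asyncio` sketch. The agent functions are stubs with made-up logic, and the 50 ms default timeout, segment names, and fallback values are illustrative assumptions rather than recommended settings.

```python
import asyncio
from typing import Any, Awaitable, Dict, List


async def with_timeout(coro: Awaitable[Any], timeout_s: float, fallback: Any) -> Any:
    """Return the agent's answer, or the fallback if it misses its deadline."""
    try:
        return await asyncio.wait_for(coro, timeout=timeout_s)
    except asyncio.TimeoutError:
        return fallback


async def segment_agent(user_id: str) -> List[str]:
    return ["high-value"] if user_id.startswith("vip") else ["default"]


async def creative_agent(segments: List[str]) -> str:
    return "creative-premium" if "high-value" in segments else "creative-generic"


async def bid_agent(segments: List[str]) -> float:
    return 1.20 if "high-value" in segments else 0.40


def budget_agent(bid: float, remaining_budget: float) -> float:
    """Approve the bid, or adjust it down to what the budget allows."""
    return min(bid, remaining_budget)


async def handle_bid_request(user_id: str, remaining_budget: float,
                             timeout_s: float = 0.05) -> Dict[str, Any]:
    segments = await with_timeout(segment_agent(user_id), timeout_s, ["default"])
    # Creative selection and bid pricing only depend on segments, so run them together.
    creative, bid = await asyncio.gather(
        with_timeout(creative_agent(segments), timeout_s, "creative-generic"),
        with_timeout(bid_agent(segments), timeout_s, 0.0),
    )
    return {"creative": creative, "bid": budget_agent(bid, remaining_budget)}
```

For example, `asyncio.run(handle_bid_request("vip-42", remaining_budget=1.0))` prices the impression at 1.20 and the budget check trims it to 1.0. Falling back to a bid of 0.0 on timeout means a slow agent costs you the impression rather than an uncontrolled spend.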

Step 6 – Implement Feedback Loops

Agents need to learn from outcomes (clicks, conversions, no-action). Set up a reward computation service that hooks into the conversion tracking system. When a conversion is recorded after an ad is served, the reward is attributed to the agent actions that produced that impression. Use a time-decay attribution window (e.g., 1 hour for clicks, 30 days for conversions), so that credit shrinks as the gap between the ad and the outcome grows.
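One simple way to implement time-decay attribution is exponential decay with a hard cutoff at the end of the window. The function below is a sketch under that assumption; the half-life parameter and the function name `time_decayed_reward` are choices made for this example, and other decay shapes (linear, stepwise) would fit the same interface.

```python
def time_decayed_reward(value: float, served_at: float, outcome_at: float,
                        window_s: float, half_life_s: float) -> float:
    """Credit for an outcome, halving every `half_life_s` seconds after the ad
    was served and dropping to zero outside the attribution window.

    Timestamps are in seconds (e.g. Unix time).
    """
    delay = outcome_at - served_at
    if delay < 0 or delay > window_s:
        return 0.0  # outcome precedes the ad or falls outside the window
    return value * 0.5 ** (delay / half_life_s)
```

With a 30-day window and a one-hour half-life, a conversion worth 10.0 that arrives one hour after the impression yields a reward of 5.0, while one arriving on day 31 yields nothing.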

Store rewards in the agent state store. Each agent periodically pulls recent experiences and updates its model. For online learning, use a replay buffer and mini-batch updates to avoid catastrophic forgetting. Implement a fallback: if the learned model is unavailable (for example, it failed to load or has not been trained yet), serve a rule-based policy instead.
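A replay buffer and a rule-based fallback can both be quite small. The sketch below uses a fixed-capacity buffer that evicts the oldest experiences and samples uniform mini-batches. `ReplayBuffer`, `choose_bid`, and the default rule-based bid of 0.25 are hypothetical names and values for illustration.

```python
import random
from collections import deque
from typing import Any, Callable, Dict, List, Optional, Tuple

Experience = Tuple[Dict[str, Any], float, float]  # (state, action, reward)


class ReplayBuffer:
    """Fixed-size store of recent experiences; the oldest are evicted first."""

    def __init__(self, capacity: int, seed: Optional[int] = None) -> None:
        self._items: deque = deque(maxlen=capacity)
        self._rng = random.Random(seed)

    def add(self, state: Dict[str, Any], action: float, reward: float) -> None:
        self._items.append((state, action, reward))

    def sample(self, batch_size: int) -> List[Experience]:
        """Uniform mini-batch without replacement, capped at the buffer size."""
        k = min(batch_size, len(self._items))
        return self._rng.sample(list(self._items), k)

    def __len__(self) -> int:
        return len(self._items)


def choose_bid(model: Optional[Callable[[Dict[str, Any]], float]],
               state: Dict[str, Any], rule_based_bid: float = 0.25) -> float:
    """Use the learned policy when one is available, else a fixed rule-based bid."""
    if model is None:
        return rule_based_bid
    return model(state)
```

Sampling mini-batches from a mix of older and newer experiences, rather than training only on the latest events, is what helps the model retain earlier behavior.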

Step 7 – Monitor and Optimize

Track key metrics for each agent and the overall system:

- Agent-level: bid success rate, creative CTR, budget utilization, reward convergence

- System-level: overall cost per acquisition, revenue lift vs. baseline, latency percentiles

Use dashboards to visualize agent interactions. Set up alerts for anomalies (e.g., reward suddenly drops, budget overspent). Periodically retrain agents on fresh data or incorporate online learning as described. Run regular A/B tests of the multi-agent system against a single centralized agent to validate improvement.
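As a minimal sketch of an anomaly alert, the detector below flags a reward that falls well below its recent rolling average. The class name `RewardDropAlert`, the window size, and the 50% drop threshold are assumptions for illustration, and the check presumes rewards are non-negative. In practice you would likely express the same rule in your monitoring stack.

```python
from collections import deque
from statistics import mean


class RewardDropAlert:
    """Flags a reward that is far below the rolling mean of recent rewards."""

    def __init__(self, window: int = 20, drop_ratio: float = 0.5) -> None:
        self.window = window
        self.drop_ratio = drop_ratio
        self._history: deque = deque(maxlen=window)

    def observe(self, reward: float) -> bool:
        """Record a reward; return True if it is below drop_ratio * rolling mean.

        No alert fires until a full window of history has been collected.
        """
        alert = (
            len(self._history) == self.window
            and reward < self.drop_ratio * mean(self._history)
        )
        self._history.append(reward)
        return alert
```

Waiting for a full window before alerting avoids false alarms during warm-up, at the cost of being blind for the first few observations after a restart.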

Tips

- Start simple: Begin with only two agents (bid and budget) before adding complexity.

- Simulate before deploying: Build a simulator that replays historical traffic to test agent behavior.

- Watch for emergent behaviors: Agents may “collude” (e.g., bid higher to exhaust budget early) – enforce sanity checks.

- Keep state atomic: Use transactions when updating global state like budget to avoid race conditions.

- Plan for scaling: Your architecture should handle 10x the current traffic without redesign.

- Document agent decisions: For compliance and debugging, log why each decision was made.
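The "keep state atomic" tip can be illustrated with a check-and-decrement that happens under a single lock. This is a single-process sketch: `BudgetLedger` and `try_reserve` are hypothetical names, amounts are integers (for example, cents) to avoid floating-point drift, and in a distributed deployment the lock would be replaced by a transaction or atomic operation in the shared state store.

```python
import threading


class BudgetLedger:
    """Holds remaining budget; reservations are checked and applied atomically."""

    def __init__(self, daily_budget: int) -> None:
        self._remaining = daily_budget
        self._lock = threading.Lock()

    def try_reserve(self, amount: int) -> bool:
        """Deduct `amount` if it fits in the remaining budget, else refuse."""
        with self._lock:
            # The check and the update happen under one lock, so two concurrent
            # bids can never both spend the same remaining budget.
            if amount <= 0 or amount > self._remaining:
                return False
            self._remaining -= amount
            return True

    @property
    def remaining(self) -> int:
        with self._lock:
            return self._remaining
```

If 50 threads each try to reserve 5 units from a budget of 100, exactly 20 succeed and the rest are refused, so the budget is never overspent.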

With these steps, you can build a robust multi-agent advertising system that continuously adapts and improves. For more details, refer to Multi-Agent Reinforcement Learning for Online Advertising (Spotify Engineering Blog) and related literature.
