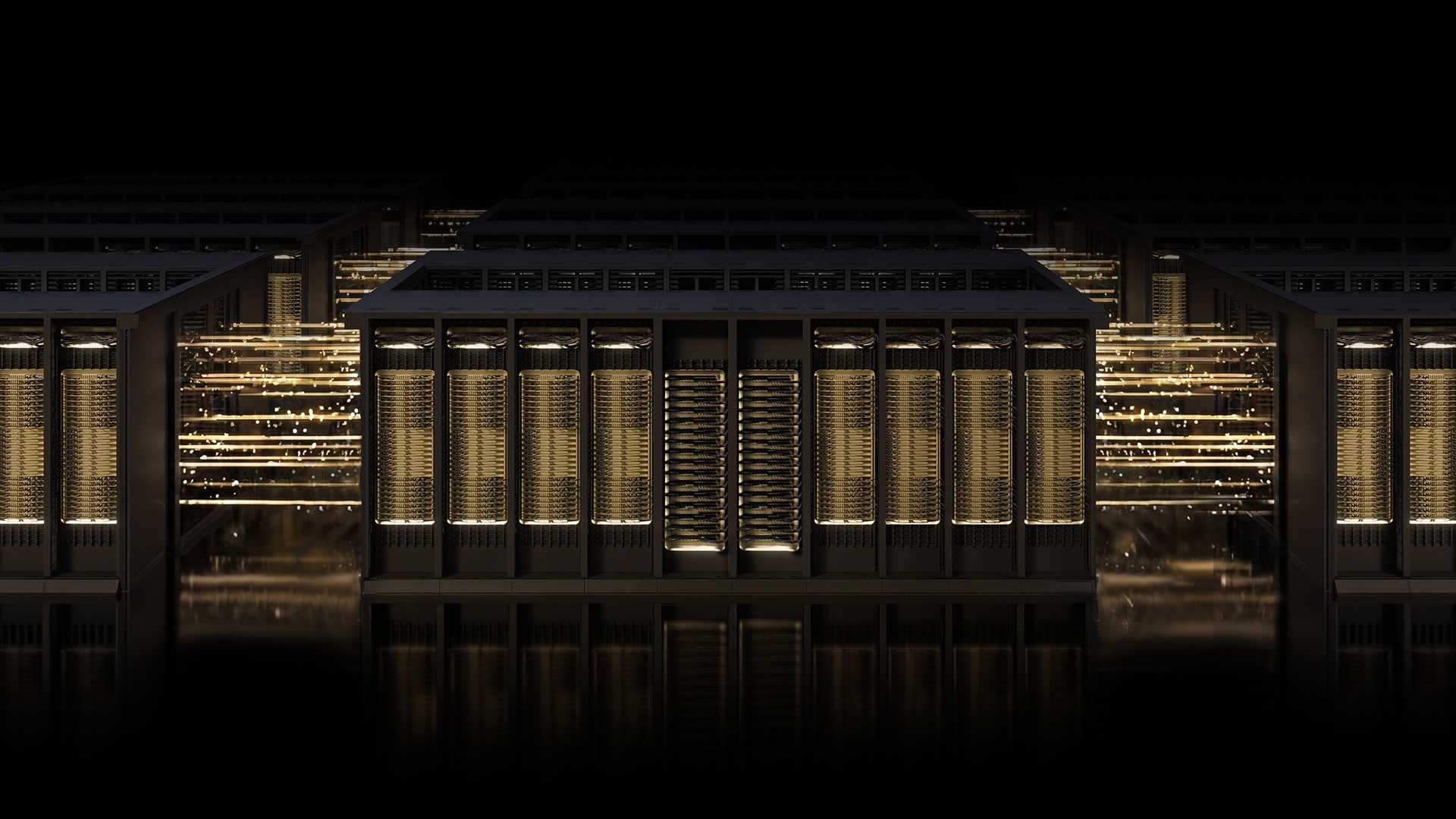

NVIDIA Spectrum-X Ethernet with MRC Protocol Breaks Through AI Networking Barriers


In a landmark development for AI infrastructure, NVIDIA, Microsoft, and OpenAI have unveiled the Multipath Reliable Connection (MRC) transport protocol, now open-sourced through the Open Compute Project. MRC enables a single RDMA connection to distribute traffic across multiple network paths, dramatically improving throughput, load balancing, and availability for gigascale AI training.
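To illustrate the basic idea of one connection using many paths, here is a minimal Python sketch. It is not MRC's wire format or algorithm: the packet layout, the tiny `MTU`, the per-path delays, and every function name are hypothetical. It assumes each packet carries its own offset within the message, so the receiver can place packets that arrive out of order over different paths without first putting them back in sequence.

```python
MTU = 4  # hypothetical, tiny payload size so the trace stays readable

def packetize(message: bytes, mtu: int) -> list:
    """Split one message into (seq, offset, payload) packets of a single connection."""
    return [(seq, off, message[off:off + mtu])
            for seq, off in enumerate(range(0, len(message), mtu))]

def spray(packets: list, num_paths: int) -> dict:
    """Assign consecutive packets to paths round-robin instead of pinning them to one."""
    paths = {p: [] for p in range(num_paths)}
    for pkt in packets:
        paths[pkt[0] % num_paths].append(pkt)
    return paths

def deliver(paths: dict, path_delay: dict) -> list:
    """Simulate arrival: each path has its own (hypothetical) delay, one tick per packet."""
    arrivals = []
    for p, pkts in paths.items():
        for i, pkt in enumerate(pkts):
            arrivals.append((path_delay[p] + i, pkt))
    arrivals.sort(key=lambda a: a[0])  # stable sort keeps ties in path order
    return [pkt for _, pkt in arrivals]

def place(arrivals: list, length: int) -> bytes:
    """Receiver writes each payload at its offset, so arrival order does not matter."""
    buf = bytearray(length)
    for _seq, off, payload in arrivals:
        buf[off:off + len(payload)] = payload
    return bytes(buf)

message = b"gradient shard for all-reduce"
packets = packetize(message, MTU)
arrivals = deliver(spray(packets, num_paths=4), path_delay={0: 5, 1: 0, 2: 3, 3: 1})
print([seq for seq, _, _ in arrivals])           # → [1, 5, 3, 7, 2, 6, 0, 4]
print(place(arrivals, len(message)) == message)  # → True
```

Tolerating reordering is what makes per-packet spreading practical: without it, a receiver would have to buffer packets that overtake earlier ones on a faster path, or treat them as losses.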

Deployed by industry giants including OpenAI, Microsoft, and Oracle, MRC is already powering some of the world's largest AI factories. The protocol, optimized on NVIDIA Spectrum-X Ethernet hardware, has proven critical for maintaining GPU utilization and avoiding network-related slowdowns during frontier training runs.

“Deploying MRC in the Blackwell generation was very successful and was made possible by a strong collaboration with NVIDIA,” said Sachin Katti, head of industrial compute at OpenAI. “MRC’s end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale.”

Background: The AI Networking Challenge

The race to build the world’s most powerful AI factories demands networking that keeps pace with the ambitions of AI itself. Traditional Ethernet fabrics struggle with congestion, packet loss, and load imbalance. Because each synchronized training step waits for its slowest data transfer, a single congested or failed link can leave many GPUs idle and slow the entire run.
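One common source of load imbalance is per-flow ECMP hashing, in which every packet of a flow follows a single hashed path, so a few large flows can collide on the same link. The sketch below is a simplified model under stated assumptions: eight hypothetical, equal-sized RoCEv2 flows (UDP destination port 4791), eight equal-bandwidth paths, and CRC32 standing in for a switch's hash function. It compares the resulting per-path load with an ideal even spread.

```python
import zlib

NUM_PATHS = 8
FLOW_BYTES = 1_000_000_000  # hypothetical: 1 GB per flow

def ecmp_path(five_tuple: tuple, num_paths: int) -> int:
    """Simplified per-flow ECMP: hash the 5-tuple, so a whole flow sticks to one path."""
    key = "|".join(str(field) for field in five_tuple).encode()
    return zlib.crc32(key) % num_paths

def per_flow_loads(flows: list, num_paths: int, flow_bytes: int) -> list:
    """Bytes carried by each path when whole flows are pinned to hashed paths."""
    loads = [0] * num_paths
    for flow in flows:
        loads[ecmp_path(flow, num_paths)] += flow_bytes
    return loads

def sprayed_loads(flows: list, num_paths: int, flow_bytes: int) -> list:
    """Bytes per path if every flow's packets were spread evenly over all paths."""
    total = len(flows) * flow_bytes
    return [total / num_paths] * num_paths

# Hypothetical workload: 8 large RoCEv2 flows, one per GPU pair.
flows = [(f"10.0.0.{i}", f"10.0.1.{i}", "udp", 49152 + i, 4791) for i in range(8)]

hashed = per_flow_loads(flows, NUM_PATHS, FLOW_BYTES)
sprayed = sprayed_loads(flows, NUM_PATHS, FLOW_BYTES)

# A collective finishes only when its most loaded link drains, so the
# max-to-mean ratio is a rough proxy for the slowdown caused by collisions.
imbalance = max(hashed) / (sum(hashed) / NUM_PATHS)
print("per-flow ECMP loads (GB):", [b / 1e9 for b in hashed])
print("evenly sprayed (GB):     ", [b / 1e9 for b in sprayed])
print(f"approximate slowdown from hash collisions: {imbalance:.2f}x")
```

With any hash, the ratio can only be 1.0 when no two flows share a path; every collision pushes it higher while other links sit partly idle.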

NVIDIA Spectrum-X Ethernet scale-out infrastructure was designed to overcome these limitations. It combines purpose-built hardware, deep telemetry, and intelligent fabric control to deliver the performance, resilience, and scale required for gigascale AI.

Think of MRC as replacing a single-lane road across town with a well-planned street grid and a real-time traffic app that lets drivers reroute around slowdowns and road closures.

Key Features of MRC

- Multi-Path Load Balancing: Distributes traffic across all available paths, ensuring every GPU gets the bandwidth it needs throughout a training run.

- Dynamic Congestion Avoidance: Sustains high bandwidth by automatically diverting traffic from overloaded paths in real time.

- Intelligent Retransmission: Enables rapid, precise recovery from data loss, minimizing impact on long-running jobs and reducing GPU idle time.

- Fine-Grained Visibility: Administrators gain granular visibility into, and control over, traffic paths, simplifying operations and accelerating troubleshooting.
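The first three features can be pictured together in a hypothetical sender that spreads one connection's packets across paths, steers away from paths reporting congestion, and resends only the packets that were actually lost. This is a minimal Python sketch, assuming a per-packet congestion signal (such as an ECN mark) and a selective acknowledgment that lists received sequence numbers; the class, its fields, the smoothing factor `alpha`, and the `threshold` are illustrative assumptions, not part of the MRC specification.

```python
from dataclasses import dataclass, field

@dataclass
class MultipathSender:
    """Hypothetical sender-side state for one connection spread over several paths."""
    num_paths: int
    alpha: float = 0.5       # hypothetical EWMA weight for congestion samples
    threshold: float = 0.5   # hypothetical score at or above which a path is avoided
    scores: list = field(default_factory=list)
    in_flight: dict = field(default_factory=dict)  # seq -> path it was last sent on
    next_seq: int = 0
    cursor: int = 0

    def __post_init__(self) -> None:
        self.scores = [0.0] * self.num_paths

    def pick_path(self) -> int:
        """Round-robin over uncongested paths; fall back to the least congested one."""
        for i in range(self.num_paths):
            p = (self.cursor + i) % self.num_paths
            if self.scores[p] < self.threshold:
                self.cursor = (p + 1) % self.num_paths
                return p
        return min(range(self.num_paths), key=lambda p: self.scores[p])

    def send(self) -> tuple:
        seq, path = self.next_seq, self.pick_path()
        self.in_flight[seq] = path
        self.next_seq += 1
        return seq, path

    def on_congestion_signal(self, path: int, congested: bool) -> None:
        """Fold a per-packet signal (e.g. an ECN mark) into the path's smoothed score."""
        sample = 1.0 if congested else 0.0
        self.scores[path] = (1 - self.alpha) * self.scores[path] + self.alpha * sample

    def on_selective_ack(self, received: set) -> list:
        """Resend only the gaps below the highest received seq, on freshly chosen paths."""
        if not received:
            return []
        highest = max(received)
        for seq in received:
            self.in_flight.pop(seq, None)
        retransmits = []
        for seq in sorted(s for s in self.in_flight if s < highest):
            self.on_congestion_signal(self.in_flight[seq], congested=True)  # loss as congestion
            new_path = self.pick_path()
            self.in_flight[seq] = new_path
            retransmits.append((seq, new_path))
        return retransmits

sender = MultipathSender(num_paths=4)
sent = [sender.send() for _ in range(8)]         # seqs 0..7 over paths 0,1,2,3,0,1,2,3
sender.on_congestion_signal(2, congested=True)   # path 2 reports an ECN mark
retransmits = sender.on_selective_ack({0, 1, 3, 4, 5, 7})  # seqs 2 and 6 were lost
print(retransmits)                               # → [(2, 0), (6, 1)]
next_send = sender.send()                        # path 2 is skipped while congested
print(next_send)                                 # → (8, 3)
```

Retransmitting only the gaps, rather than everything after the first loss as go-back-N schemes do, keeps recovery cost proportional to what was actually lost, which matters for long-running jobs.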

Deployment and Impact

Microsoft’s Fairwater and Oracle Cloud Infrastructure’s (OCI) Abilene data center, two of the largest AI factories purpose-built for training and deploying leading-edge frontier LLMs, rely on MRC to meet their performance, scale, and efficiency requirements. NVIDIA Spectrum-X Ethernet provides the network foundation needed to run large-scale AI models with confidence.

Proven first in production with performance optimized on NVIDIA Spectrum-X Ethernet hardware, MRC is now released as an open specification through the Open Compute Project. This move democratizes access to the protocol, allowing the broader AI community to benefit from its capabilities.

What This Means

The open-source release of MRC marks a turning point for AI networking. By eliminating network bottlenecks that have historically limited scaling, MRC enables organizations to achieve higher GPU utilization, faster training times, and more reliable operation across massive clusters.

For hyperscalers and AI labs, this translates into lower costs per model, faster time to deployment, and the ability to push the boundaries of what’s possible with frontier AI. For the industry at large, it establishes a new standard for gigascale Ethernet infrastructure—open, AI-native, and proven in production.

Bottom line: The MRC protocol, combined with NVIDIA Spectrum-X, is setting the benchmark for AI networking, and its open specification ensures that the entire ecosystem can build on this innovation.
